AP Statistics Curriculum 2007 ANOVA 2Way



==[[AP_Statistics_Curriculum_2007 | General Advance-Placement (AP) Statistics Curriculum]] - Two-Way Analysis of Variance (ANOVA) == | ==[[AP_Statistics_Curriculum_2007 | General Advance-Placement (AP) Statistics Curriculum]] - Two-Way Analysis of Variance (ANOVA) == | ||

| - | + | In the [[AP_Statistics_Curriculum_2007_ANOVA_2Way |previous section]], we discussed statistical inference in comparing ''k'' independent samples separated by a single (grouping) factor. Now we will discuss variance decomposition of data into (independent/orthogonal) components when we have two (grouping) factors. Hence, this procedure is called '''Two-Way Analysis of Variance'''. | |

| - | + | ||

| - | + | ||

| - | === | + | ===Motivational Example=== |

| - | + | Suppose 5 varieties of peas are currently being tested by a large agribusiness cooperative to determine which is best suited for production. A field was divided into 20 plots, with each variety of peas planted in four plots. The yields (in bushels of peas) produced from each plot are shown in two identical forms in the tables below. | |

| - | + | <center> | |

| + | {| class="wikitable" style="text-align:center; width:30%" border="1" | ||

| + | |- | ||

| + | | colspan=5| Variety of Pea | ||

| + | |- | ||

| + | | A || B || C || D || E | ||

| + | |- | ||

| + | | 26.2 || 29.2 || 29.1 || 21.3 || 20.1 | ||

| + | |- | ||

| + | | 24.3 || 28.1 || 30.8 || 22.4 || 19.3 | ||

| + | |- | ||

| + | | 21.8 || 27.3 || 33.9 || 24.3 || 19.9 | ||

| + | |- | ||

| + | | 28.1 || 31.2 || 32.8 || 21.8 || 22.1 | ||

| + | |} | ||

| + | <br> <br> | ||

| + | {| class="wikitable" style="text-align:center; width:30%" border="1" | ||

| + | |- | ||

| + | | A || 26.2,24.3,21.8,28.1 | ||

| + | |- | ||

| + | | B || 29.2,28.1,27.3,31.2 | ||

| + | |- | ||

| + | | C || 29.1,30.8,33.9,32.8 | ||

| + | |- | ||

| + | | D || 21.3,22.4,24.3,21.8 | ||

| + | |- | ||

| + | | E || 20.1,19.3,19.9,22.1 | ||

| + | |} | ||

| + | </center> | ||

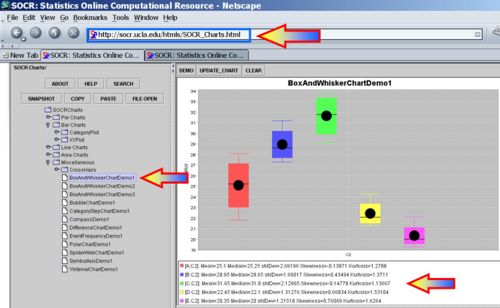

| - | + | Using the [http://socr.ucla.edu/htmls/SOCR_Charts.html SOCR Charts] (see [[SOCR_EduMaterials_Activities_BoxAndWhiskerChart | SOCR Box-and-Whisker Plot Activity]] and [[SOCR_EduMaterials_Activities_DotChart | Dot Plot Activity]]), we can generate plots that enable us to compare visually the yields of the 5 different types of peas. | |

| - | + | ||

| - | * | + | <center>[[Image:SOCR_EBook_Dinov_ANOVA1_021708_Fig1.jpg|500px]]</center> |

| + | |||

| + | ===Two-Way ANOVA Calculations=== | ||

| + | |||

| + | Let's make the following notation: | ||

| + | : <math>y_{i,j}</math> = the measurement from ''group i'', ''observation-index j''. | ||

| + | : k = number of groups | ||

| + | : <math>n_i</math> = number of observations in group ''i'' | ||

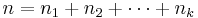

| + | : n = total number of observations, <math>n= n_1 + n_2 + \cdots + n_k</math> | ||

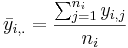

| + | : The group mean for group i is: <math>\bar{y}_{i,.} = {\sum_{j=1}^{n_i}{y_{i,j}} \over n_i}</math> | ||

| + | : The grand mean is: <math>\bar{y}=\bar{y}_{.,.} = {\sum_{i=1}^k {\sum_{j=1}^{n_i}{y_{i,j}}} \over n}</math> | ||

| + | |||

| + | : Two-way Model: <math>y_{i,j,k} = \mu +\tau_i +\beta_j +\gamma_{i,j} + \epsilon_{i,j,k}</math>, for all <math>1\leq i\leq a</math>, <math>1\leq j\leq b</math> and <math>1\leq k\leq r</math>. Here <math>\mu</math> is the overall mean response, <math>\tau_i</math> is the effect due to the <math>i^{th}</math> level of factor A, <math>\beta_j</math> is the effect due to the <math>j^{th}</math> level of factor B and <math>\gamma_{i,j}</math> is the effect due to any interaction between the <math>i^{th}</math> level of factor A and the <math>j^{th}</math> level of factor B. | ||

| + | |||

| + | When an <math>a \times b</math> factorial experiment is conducted with an equal number of observations per treatment combination, and where AB represents the interaction between A and B, the total (corrected) sum of squares is partitioned as: | ||

| + | |||

| + | <center><math>SS(Total) = SS(A) + SS(B) + SS(AB) + SSE</math></center> | ||

| + | |||

| + | ===Hypotheses=== | ||

| + | There are three sets of hypotheses with the two-way ANOVA. | ||

| + | |||

| + | ====The null hypotheses for each of the sets==== | ||

| + | * The population means of the first factor are equal. This is like the one-way ANOVA for the row factor. | ||

| + | * The population means of the second factor are equal. This is like the one-way ANOVA for the column factor. | ||

| + | * There is no interaction between the two factors. This is similar to performing a test for independence with contingency tables. | ||

| + | |||

| + | ====Factors==== | ||

| + | The two independent variables in a two-way ANOVA are called factors (denoted by A and B). The idea is that there are two variables, factors, which affect the dependent variable (Y). Each factor will have two or more levels within it, and the degrees of freedom for each factor is one less than the number of levels. | ||

| + | |||

| + | ====Treatment Groups==== | ||

| + | Treatment groups are formed by making all possible combinations of the levels of the two factors. For example, if the first factor has 5 levels and the second factor has 6 levels, then there will be <math>5\times6=30</math> different treatment groups. | ||

| + | |||

| + | ====Main Effect==== | ||

| + | The main effect involves the independent variables one at a time. The interaction is ignored for this part. Just the rows or just the columns are used, not mixed. This is the part which is similar to the one-way analysis of variance. Each of the variances calculated to analyze the main effects is like the between-group variance in a one-way ANOVA. | ||

| + | |||

| + | ====Interaction Effect==== | ||

| + | The interaction effect measures how the effect of one factor on the dependent variable changes across the levels of the other factor. Its degrees of freedom equal the product of the degrees of freedom of the two factors. | ||

| + | |||

| + | ====Within Variation==== | ||

| + | The within variation is the sum of squares within each treatment group. Each treatment group contributes one less than its sample size in degrees of freedom (remember that all treatment groups must have the same sample size for a two-way ANOVA). The total number of treatment groups is the product of the number of levels for each factor, so the within degrees of freedom equal that product times one less than the per-group sample size. The within variance is the within variation divided by its degrees of freedom. The within-group variation is also called the error. | ||

| + | |||

| + | ====F-Tests==== | ||

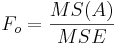

| + | There is an F-test for each of the hypotheses. Each F statistic is the mean square of the corresponding effect (main effect A, main effect B, or the interaction) divided by the within variance. The numerator degrees of freedom come from the effect being tested, and the denominator degrees of freedom are those of the within variance in each case. | ||

| + | |||

| + | ====Two-Way ANOVA Table==== | ||

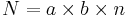

| + | It is assumed that main effect A has a levels (so df(A) = a-1), main effect B has b levels (so df(B) = b-1), r is the sample size of each treatment group, and <math>N = a\times b\times r</math> is the total sample size. Notice the overall degrees of freedom is once again one less than the total sample size. In the summary below, MS(W) denotes the within (error) mean square. | ||

| + | | ||

| + | {| class="wikitable" style="text-align:center; width:50%" border="1" | ||

| + | |- | ||

| + | | Source || SS || df || MS || F | ||

| + | |- | ||

| + | | Main Effect A || given || a-1 || SS / df || MS(A) / MS(W) | ||

| + | |- | ||

| + | | Main Effect B || given || b-1 || SS / df || MS(B) / MS(W) | ||

| + | |- | ||

| + | | Interaction Effect A*B || given || (a-1)(b-1) || SS / df || MS(A*B) / MS(W) | ||

| + | |- | ||

| + | | Within || given || N - ab = ab(r-1) || SS / df || | ||

| + | |- | ||

| + | | Total || sum of others || N - 1 = abr - 1 || || | ||

| + | |} | ||

| + | <center> | ||

| + | {| class="wikitable" style="text-align:center; width:50%" border="1" | ||

| + | |- | ||

| + | | Variance Source || Degrees of Freedom (df) || Sum of Squares (SS) || Mean Sum of Squares (MS) || F-Statistics || [http://socr.ucla.edu/htmls/SOCR_Distributions.html P-value] | ||

| + | |- | ||

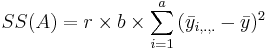

| + | | Main Effect A || df(A)=a-1 || <math>SS(A)=r\times b\times\sum_{i=1}^{a}{(\bar{y}_{i,.,.}-\bar{y})^2}</math> || <math>{SS(A)\over df(A)}</math> || <math>F_o = {MS(A)\over MSE}</math> || <math>P(F_{(df(A), df(E))} > F_o)</math> | ||

| + | |- | ||

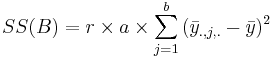

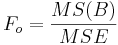

| + | | Main Effect B || df(B)=b-1 || <math>SS(B)=r\times a\times\sum_{j=1}^{b}{(\bar{y}_{., j,.}-\bar{y})^2}</math> || <math>{SS(B)\over df(B)}</math> || <math>F_o = {MS(B)\over MSE}</math> || <math>P(F_{(df(B), df(E))} > F_o)</math> | ||

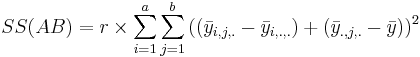

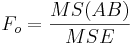

| + | |- | ||

| + | | A vs. B Interaction || df(AB)=(a-1)(b-1) || <math>SS(AB)=r\times \sum_{i=1}^{a}{\sum_{j=1}^{b}{((\bar{y}_{i, j,.}-\bar{y}_{i, .,.})-(\bar{y}_{., j,.}-\bar{y}))^2}}</math> || <math>{SS(AB)\over df(AB)}</math> || <math>F_o = {MS(AB)\over MSE}</math> || <math>P(F_{(df(AB), df(E))} > F_o)</math> | ||

| + | |- | ||

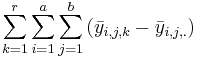

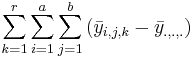

| + | | Error || <math>df(E)=N-a\times b</math> || <math>SSE=\sum_{k=1}^r{\sum_{i=1}^{a}{\sum_{j=1}^{b}{(y_{i, j,k}-\bar{y}_{i, j,.})^2}}}</math> || <math>MSE={SSE\over df(E)}</math> || || | ||

| + | |- | ||

| + | | Total || N-1 || <math>SS(Total)=\sum_{k=1}^r{\sum_{i=1}^{a}{\sum_{j=1}^{b}{(y_{i, j,k}-\bar{y}_{., .,.})^2}}}</math> || || || [[SOCR_EduMaterials_AnalysisActivities_ANOVA_2 | ANOVA Activity]] | ||

| + | |} | ||

| + | </center> | ||

| + | |||

| + | To compute the difference between the means, we will compare each group mean to the grand mean. | ||

| + | |||

| + | |||

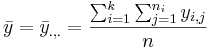

| + | ===SOCR ANOVA Calculations=== | ||

| + | [http://socr.ucla.edu/htmls/SOCR_Analyses.html SOCR Analyses] provide the tools to compute the [[SOCR_EduMaterials_AnalysisActivities_ANOVA_1 |1-way ANOVA]]. For example, the ANOVA for the peas data above may be easily computed - see the image below. Note that SOCR ANOVA requires the data to be entered in this format: | ||

| + | <center> | ||

| + | {| class="wikitable" style="text-align:center; width:30%" border="1" | ||

| + | |}</center> | ||

| + | |||

| + | <center>[[Image:SOCR_EBook_Dinov_ANOVA1_021708_Fig2.jpg|500px]]</center> | ||

| - | |||

| - | |||

===Examples=== | ===Examples=== | ||

| - | |||

| - | + | ====TBD==== | |

| - | + | ||

| - | === | + | |

| - | + | ||

| - | * | + | |

| + | ===Two-Way ANOVA Conditions=== | ||

| + | The two-way ANOVA is valid if: | ||

| + | * The populations from which the samples were obtained are normally or approximately normally distributed. | ||

| + | * The samples are independent. | ||

| + | * The variances of the populations are equal. | ||

| + | * The groups have the same sample size. | ||

<hr> | <hr> | ||

| + | |||

===References=== | ===References=== | ||

| - | |||

<hr> | <hr> | ||

Revision as of 01:14, 20 February 2008


General Advance-Placement (AP) Statistics Curriculum - Two-Way Analysis of Variance (ANOVA)

In the previous section, we discussed statistical inference in comparing k independent samples separated by a single (grouping) factor. Now we will discuss variance decomposition of data into (independent/orthogonal) components when we have two (grouping) factors. Hence, this procedure is called Two-Way Analysis of Variance.

Motivational Example

Suppose 5 varieties of peas are currently being tested by a large agribusiness cooperative to determine which is best suited for production. A field was divided into 20 plots, with each variety of peas planted in four plots. The yields (in bushels of peas) produced from each plot are shown in two identical forms in the tables below.

| Variety of Pea | ||||

| A | B | C | D | E |

| 26.2 | 29.2 | 29.1 | 21.3 | 20.1 |

| 24.3 | 28.1 | 30.8 | 22.4 | 19.3 |

| 21.8 | 27.3 | 33.9 | 24.3 | 19.9 |

| 28.1 | 31.2 | 32.8 | 21.8 | 22.1 |

| A | 26.2,24.3,21.8,28.1 |

| B | 29.2,28.1,27.3,31.2 |

| C | 29.1,30.8,33.9,32.8 |

| D | 21.3,22.4,24.3,21.8 |

| E | 20.1,19.3,19.9,22.1 |

Using the SOCR Charts (see SOCR Box-and-Whisker Plot Activity and Dot Plot Activity), we can generate plots that enable us to compare visually the yields of the 5 different types of peas.

Two-Way ANOVA Calculations

Let's make the following notation:

- yi,j = the measurement from group i, observation-index j.

- k = number of groups

- ni = number of observations in group i

- n = total number of observations, <math>n= n_1 + n_2 + \cdots + n_k</math>

- The group mean for group i is: <math>\bar{y}_{i,.} = {\sum_{j=1}^{n_i}{y_{i,j}} \over n_i}</math>

- The grand mean is: <math>\bar{y}=\bar{y}_{.,.} = {\sum_{i=1}^k {\sum_{j=1}^{n_i}{y_{i,j}}} \over n}</math>

- Two-way Model: yi,j,k = μ + τi + βj + γi,j + εi,j,k, for all 1 ≤ i ≤ a, 1 ≤ j ≤ b and 1 ≤ k ≤ r. Here μ is the overall mean response, τi is the effect due to the ith level of factor A, βj is the effect due to the jth level of factor B and γi,j is the effect due to any interaction between the ith level of factor A and the jth level of factor B.
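As a small illustration of the group-mean and grand-mean notation above, the following sketch computes both for the pea yields from the motivational example. The variable names (`yields`, `group_means`, `grand_mean`) are chosen here for illustration and are not part of SOCR.

```python
from statistics import mean

# Pea yields (bushels) from the motivational example, keyed by variety.
yields = {
    "A": [26.2, 24.3, 21.8, 28.1],
    "B": [29.2, 28.1, 27.3, 31.2],
    "C": [29.1, 30.8, 33.9, 32.8],
    "D": [21.3, 22.4, 24.3, 21.8],
    "E": [20.1, 19.3, 19.9, 22.1],
}

# Group mean: sum of the observations in group i divided by n_i.
group_means = {variety: mean(obs) for variety, obs in yields.items()}

# Grand mean: sum of all observations divided by n = n_1 + ... + n_k.
n = sum(len(obs) for obs in yields.values())
grand_mean = sum(sum(obs) for obs in yields.values()) / n

for variety, m in group_means.items():
    print(f"group mean {variety}: {m:.2f}")
print(f"n = {n}, grand mean = {grand_mean:.2f}")
```

Because every variety has the same number of plots, the grand mean here also equals the average of the five group means.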

When an a × b factorial experiment is conducted with an equal number of observations per treatment combination, and where AB represents the interaction between A and B, the total (corrected) sum of squares is partitioned as:

SS(Total) = SS(A) + SS(B) + SS(AB) + SSE
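The partition of the total sum of squares can be checked numerically. The sketch below assumes a balanced design stored as a nested list, where `data[i][j]` holds the r replicates for level i of factor A and level j of factor B. The data values and the function name `two_way_sums_of_squares` are hypothetical and serve only to illustrate the identity.

```python
def two_way_sums_of_squares(data):
    """Return SS(A), SS(B), SS(AB), SSE and SS(Total) for a balanced design.

    data[i][j] is the list of r replicates for level i of A and level j of B.
    """
    a, b, r = len(data), len(data[0]), len(data[0][0])
    n_total = a * b * r
    grand = sum(y for row in data for cell in row for y in cell) / n_total
    cell_mean = [[sum(cell) / r for cell in row] for row in data]
    # With equal cell sizes, marginal means are averages of cell means.
    a_mean = [sum(cell_mean[i]) / b for i in range(a)]
    b_mean = [sum(cell_mean[i][j] for i in range(a)) / a for j in range(b)]

    ss_a = r * b * sum((m - grand) ** 2 for m in a_mean)
    ss_b = r * a * sum((m - grand) ** 2 for m in b_mean)
    ss_ab = r * sum(
        (cell_mean[i][j] - a_mean[i] - b_mean[j] + grand) ** 2
        for i in range(a) for j in range(b)
    )
    ss_e = sum(
        (y - cell_mean[i][j]) ** 2
        for i in range(a) for j in range(b) for y in data[i][j]
    )
    ss_total = sum((y - grand) ** 2 for row in data for cell in row for y in cell)
    return {"A": ss_a, "B": ss_b, "AB": ss_ab, "E": ss_e, "Total": ss_total}


# Hypothetical 2 x 3 design with r = 2 replicates per treatment group.
data = [
    [[4, 6], [7, 9], [10, 12]],
    [[5, 7], [8, 8], [15, 17]],
]
ss = two_way_sums_of_squares(data)
print(ss)
print("partition holds:",
      abs(ss["Total"] - (ss["A"] + ss["B"] + ss["AB"] + ss["E"])) < 1e-9)
```

The interaction term is computed as cell mean minus row mean minus column mean plus grand mean, which is what makes the four components add up to the total.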

Hypotheses

There are three sets of hypotheses with the two-way ANOVA.

The null hypotheses for each of the sets

- The population means of the first factor are equal. This is like the one-way ANOVA for the row factor.

- The population means of the second factor are equal. This is like the one-way ANOVA for the column factor.

- There is no interaction between the two factors. This is similar to performing a test for independence with contingency tables.

Factors

The two independent variables in a two-way ANOVA are called factors (denoted by A and B). The idea is that there are two variables, factors, which affect the dependent variable (Y). Each factor will have two or more levels within it, and the degrees of freedom for each factor is one less than the number of levels.

Treatment Groups

Treatment groups are formed by making all possible combinations of the levels of the two factors. For example, if the first factor has 5 levels and the second factor has 6 levels, then there will be 5 × 6 = 30 different treatment groups.
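The 5 × 6 = 30 count can be reproduced by enumerating every combination of levels. The level labels below (`A1`..`A5`, `B1`..`B6`) are made up for this illustration.

```python
from itertools import product

# Hypothetical level labels for the two factors.
levels_a = [f"A{i}" for i in range(1, 6)]   # 5 levels of the first factor
levels_b = [f"B{j}" for j in range(1, 7)]   # 6 levels of the second factor

# Each treatment group is one (level of A, level of B) pair.
treatment_groups = list(product(levels_a, levels_b))

print(len(treatment_groups))
print(treatment_groups[:3])
```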

Main Effect

The main effect involves the independent variables one at a time. The interaction is ignored for this part. Just the rows or just the columns are used, not mixed. This is the part which is similar to the one-way analysis of variance. Each of the variances calculated to analyze the main effects is like the between-group variance in a one-way ANOVA.

Interaction Effect

The interaction effect measures how the effect of one factor on the dependent variable changes across the levels of the other factor. Its degrees of freedom equal the product of the degrees of freedom of the two factors.

Within Variation

The within variation is the sum of squares within each treatment group. Each treatment group contributes one less than its sample size in degrees of freedom (remember that all treatment groups must have the same sample size for a two-way ANOVA). The total number of treatment groups is the product of the number of levels for each factor, so the within degrees of freedom equal that product times one less than the per-group sample size. The within variance is the within variation divided by its degrees of freedom. The within-group variation is also called the error.

F-Tests

There is an F-test for each of the hypotheses. Each F statistic is the mean square of the corresponding effect (main effect A, main effect B, or the interaction) divided by the within variance. The numerator degrees of freedom come from the effect being tested, and the denominator degrees of freedom are those of the within variance in each case.
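The degrees of freedom used in the three F-tests follow directly from the number of levels and the per-group sample size. The helper below is a sketch under the balanced-design assumption; the name `two_way_df` and the example sizes are illustrative only.

```python
def two_way_df(a, b, r):
    """Degrees of freedom for a balanced a x b design with r replicates."""
    n_total = a * b * r
    return {
        "A": a - 1,                  # numerator df for the factor A test
        "B": b - 1,                  # numerator df for the factor B test
        "AB": (a - 1) * (b - 1),     # numerator df for the interaction test
        "Error": a * b * (r - 1),    # denominator df for all three tests
        "Total": n_total - 1,
    }


df = two_way_df(a=5, b=6, r=3)
print(df)
# The component degrees of freedom add up to the total.
print(df["A"] + df["B"] + df["AB"] + df["Error"] == df["Total"])
```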

Two-Way ANOVA Table

It is assumed that main effect A has a levels (so df(A) = a-1), main effect B has b levels (so df(B) = b-1), r is the sample size of each treatment group, and N = a × b × r is the total sample size. Notice the overall degrees of freedom is once again one less than the total sample size. In the summary below, MS(W) denotes the within (error) mean square.

| Source | SS | df | MS | F |

| Main Effect A | given | a-1 | SS / df | MS(A) / MS(W) |

| Main Effect B | given | b-1 | SS / df | MS(B) / MS(W) |

| Interaction Effect A*B | given | (a-1)(b-1) | SS / df | MS(A*B) / MS(W) |

| Within | given | N - ab = ab(r-1) | SS / df | |

| Total | sum of others | N - 1 = abr - 1 | | |

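| Variance Source | Degrees of Freedom (df) | Sum of Squares (SS) | Mean Sum of Squares (MS) | F-Statistics | P-value |

| Main Effect A | df(A)=a-1 | <math>SS(A)=r\times b\times\sum_{i=1}^{a}{(\bar{y}_{i,.,.}-\bar{y})^2}</math> | <math>{SS(A)\over df(A)}</math> | <math>F_o = {MS(A)\over MSE}</math> | P(F(df(A),df(E)) > Fo) |

| Main Effect B | df(B)=b-1 | <math>SS(B)=r\times a\times\sum_{j=1}^{b}{(\bar{y}_{., j,.}-\bar{y})^2}</math> | <math>{SS(B)\over df(B)}</math> | <math>F_o = {MS(B)\over MSE}</math> | P(F(df(B),df(E)) > Fo) |

| A vs. B Interaction | df(AB)=(a-1)(b-1) | <math>SS(AB)=r\times \sum_{i=1}^{a}{\sum_{j=1}^{b}{((\bar{y}_{i, j,.}-\bar{y}_{i, .,.})-(\bar{y}_{., j,.}-\bar{y}))^2}}</math> | <math>{SS(AB)\over df(AB)}</math> | <math>F_o = {MS(AB)\over MSE}</math> | P(F(df(AB),df(E)) > Fo) |

| Error | <math>df(E)=N-a\times b</math> | <math>SSE=\sum_{k=1}^r{\sum_{i=1}^{a}{\sum_{j=1}^{b}{(y_{i, j,k}-\bar{y}_{i, j,.})^2}}}</math> | <math>MSE={SSE\over df(E)}</math> | | |

| Total | N-1 | <math>SS(Total)=\sum_{k=1}^r{\sum_{i=1}^{a}{\sum_{j=1}^{b}{(y_{i, j,k}-\bar{y}_{., .,.})^2}}}</math> | | | ANOVA Activity |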

To compute the difference between the means, we will compare each group mean to the grand mean.
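The rows of the two-way ANOVA table can be assembled in a few lines once the cell, row, column and grand means are available. The sketch below assumes a balanced design stored as `data[i][j]` (the r replicates for level i of A and level j of B). The data and the function name `two_way_anova_table` are hypothetical, and p-values are left out because they need the F distribution, which the Python standard library does not provide.

```python
def two_way_anova_table(data):
    """Build df, SS, MS and F for each source in a balanced two-way design."""
    a, b, r = len(data), len(data[0]), len(data[0][0])
    grand = sum(y for row in data for cell in row for y in cell) / (a * b * r)
    cell = [[sum(c) / r for c in row] for row in data]
    a_mean = [sum(cell[i]) / b for i in range(a)]
    b_mean = [sum(cell[i][j] for i in range(a)) / a for j in range(b)]

    ss = {
        "A": r * b * sum((m - grand) ** 2 for m in a_mean),
        "B": r * a * sum((m - grand) ** 2 for m in b_mean),
        "AB": r * sum((cell[i][j] - a_mean[i] - b_mean[j] + grand) ** 2
                      for i in range(a) for j in range(b)),
        "Error": sum((y - cell[i][j]) ** 2
                     for i in range(a) for j in range(b) for y in data[i][j]),
    }
    df = {"A": a - 1, "B": b - 1, "AB": (a - 1) * (b - 1),
          "Error": a * b * (r - 1)}

    mse = ss["Error"] / df["Error"]
    table = {}
    for source in ("A", "B", "AB"):
        ms = ss[source] / df[source]
        table[source] = {"df": df[source], "SS": ss[source],
                         "MS": ms, "F": ms / mse}
    table["Error"] = {"df": df["Error"], "SS": ss["Error"],
                      "MS": mse, "F": None}
    # The total row is the sum of the other rows (balanced design).
    table["Total"] = {"df": a * b * r - 1, "SS": sum(ss.values()),
                      "MS": None, "F": None}
    return table


# Hypothetical 2 x 3 design with r = 2 replicates per treatment group.
data = [
    [[4, 6], [7, 9], [10, 12]],
    [[5, 7], [8, 8], [15, 17]],
]
table = two_way_anova_table(data)
for source, row in table.items():
    print(source, row)
```

If SciPy happens to be available, the upper-tail probability for each F statistic could be obtained with `scipy.stats.f.sf(F, df_effect, df_error)`; that dependency is an assumption and is not used in the sketch.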

SOCR ANOVA Calculations

SOCR Analyses provide the tools to compute the 1-way ANOVA. For example, the ANOVA for the peas data above may be easily computed - see the image below. Note that SOCR ANOVA requires the data to be entered in this format:

Examples

TBD

Two-Way ANOVA Conditions

The two-way ANOVA is valid if:

- The populations from which the samples were obtained are normally or approximately normally distributed.

- The samples are independent.

- The variances of the populations are equal.

- The groups have the same sample size.
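Of the conditions above, the equal-sample-size requirement is the easiest to verify mechanically before running the analysis. The check below is a minimal sketch assuming the data are stored as `data[i][j]`, a list of observations for each treatment group; the function name `is_balanced` and the example data are hypothetical. Normality, independence and equal variances need separate diagnostics that are not shown here.

```python
def is_balanced(data):
    """Return True if every treatment group has the same number of observations."""
    sizes = {len(cell) for row in data for cell in row}
    return len(sizes) == 1


balanced = [
    [[4, 6], [7, 9], [10, 12]],
    [[5, 7], [8, 8], [15, 17]],
]
unbalanced = [
    [[4, 6], [7, 9], [10]],          # one group is missing an observation
    [[5, 7], [8, 8], [15, 17]],
]

print(is_balanced(balanced))
print(is_balanced(unbalanced))
```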

References

- SOCR Home page: http://www.socr.ucla.edu
