AP Statistics Curriculum 2007 Bayesian Prelim

From Socr

| Line 7: | Line 7: | ||

In words, "the probability of event A occurring given that event B occurred is equal to the probability of event B occurring given that event A occurred times the probability of event A occurring divided by the probability that event B occurs." | In words, "the probability of event A occurring given that event B occurred is equal to the probability of event B occurring given that event A occurred times the probability of event A occurring divided by the probability that event B occurs." | ||

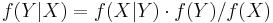

| - | Bayes Theorem can also be written in terms of densities over continuous random variables. So, if <math>f( | + | Bayes Theorem can also be written in terms of densities over continuous random variables. So, if <math>f(\cdot)</math> is some density, and <math>X</math> and <math>Y</math> are random variables, then we can say |

<math>f(Y|X) = f(X|Y) \cdot f(Y) / f(X)</math> | <math>f(Y|X) = f(X|Y) \cdot f(Y) / f(X)</math> | ||

Revision as of 18:41, 23 July 2009

Bayes Theorem

Bayes theorem can be stated succinctly by the equality

P(A | B) = P(B | A) * P(A) / P(B)

In words, "the probability of event A occurring given that event B occurred is equal to the probability of event B occurring given that event A occurred times the probability of event A occurring divided by the probability that event B occurs."
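As a minimal sketch of the equality above, the snippet below evaluates P(A | B) directly from its three inputs. The function name `bayes_posterior` and the numeric values are hypothetical, chosen only for illustration; they do not come from the SOCR page.

```python
def bayes_posterior(p_b_given_a, p_a, p_b):
    """Return P(A | B) = P(B | A) * P(A) / P(B)."""
    if p_b <= 0:
        # The theorem is only defined when P(B) is not equal to 0.
        raise ValueError("P(B) must be positive")
    return p_b_given_a * p_a / p_b


# Hypothetical inputs, for illustration only.
p_a = 0.3          # P(A)
p_b_given_a = 0.8  # P(B | A)
p_b = 0.5          # P(B)

posterior = bayes_posterior(p_b_given_a, p_a, p_b)
print(posterior)
```

With these assumed inputs the result is 0.8 * 0.3 / 0.5, that is, roughly 0.48.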

Bayes Theorem can also be written in terms of densities over continuous random variables. So, if f(·) is some density, and X and Y are random variables, then we can say

f(Y | X) = f(X | Y) * f(Y) / f(X)
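The density form f(Y | X) = f(X | Y) * f(Y) / f(X) can be checked numerically on a grid. The sketch below assumes, purely for illustration, that Y has a standard normal density and that X given Y is normal with mean Y and standard deviation 1; neither choice comes from the SOCR page. Under these assumptions the exact posterior mean of Y given X = x is x / 2, which the grid approximation should reproduce closely.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of a normal distribution with the given mean and sd."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))


x_obs = 2.0   # hypothetical observed value of X
step = 0.01
grid = [-10.0 + i * step for i in range(2001)]  # Y values from -10 to 10

# Numerator f(X | Y) * f(Y), evaluated at every grid point for Y.
numerator = [normal_pdf(x_obs, y, 1.0) * normal_pdf(y, 0.0, 1.0) for y in grid]

# f(X) is the integral of the numerator over Y (Riemann-sum approximation).
f_x = sum(numerator) * step

# Posterior density f(Y | X) on the grid.
posterior = [n / f_x for n in numerator]

# Posterior mean of Y given X = x_obs, again by a Riemann sum.
post_mean = sum(y * p for y, p in zip(grid, posterior)) * step
print(f_x, post_mean)
```

Dividing by f(X) is what makes the posterior integrate to 1 over Y; the numerator alone only gives the shape of the posterior density.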

Bayes Theorem is associated with probability statements that relate conditional and marginal properties of two random events. These statements are often written in the form "the probability of A, given B", denoted P(A|B), and the theorem gives P(A|B) = P(B|A)*P(A)/P(B), where P(B) is not equal to 0.

P(A) is often known as the Prior Probability (or as the Marginal Probability)

P(A|B) is known as the Posterior Probability (Conditional Probability)

P(B|A) is the conditional probability of B given A (also known as the likelihood function)

P(B) is the marginal (prior) probability of B and acts as the normalizing constant. In the Bayesian framework, the posterior probability is proportional to the prior belief on A times the likelihood function given by P(B|A); dividing by P(B) turns this product into a probability.
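The role of P(B) as a normalizing constant can be sketched as follows. When P(B) is not given directly, it can be obtained from the law of total probability as the sum of P(B | A_i) * P(A_i) over mutually exclusive, exhaustive events A_i. The function name, the event labels, and the numbers below are hypothetical, used only for illustration.

```python
def posterior_over_events(priors, likelihoods):
    """Return ({event: P(event | B)}, P(B)) for exclusive, exhaustive events.

    priors[event] is P(event); likelihoods[event] is P(B | event).
    """
    # Prior times likelihood: the posterior up to a constant factor.
    unnormalized = {e: priors[e] * likelihoods[e] for e in priors}
    # P(B) by the law of total probability; this is the normalizing constant.
    p_b = sum(unnormalized.values())
    if p_b <= 0:
        raise ValueError("P(B) must be positive")
    return {e: u / p_b for e, u in unnormalized.items()}, p_b


# Hypothetical inputs, for illustration only.
priors = {"A": 0.01, "not A": 0.99}       # P(A), P(not A)
likelihoods = {"A": 0.95, "not A": 0.05}  # P(B | A), P(B | not A)

posteriors, p_b = posterior_over_events(priors, likelihoods)
print(p_b, posteriors)
```

Here the unnormalized products sum to P(B), and after division the posterior probabilities sum to 1.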