AP Statistics Curriculum 2007 GLM Corr

From Socr

| Line 1: | Line 1: | ||

==[[AP_Statistics_Curriculum_2007 | General Advance-Placement (AP) Statistics Curriculum]] - Correlation == | ==[[AP_Statistics_Curriculum_2007 | General Advance-Placement (AP) Statistics Curriculum]] - Correlation == | ||

| - | === | + | Many biomedical, social, engineering and science applications involve the analysis of relationships, if any, between two or more variables involved in the process of interest. We begin with the simplest of all situations where bivariate data (''X'' and ''Y'') are measured for a process and we are interested on determining the association, relation or an appropriate model for these observations (e.g., fitting a straight line to the pairs of (''X,Y'') data). If we are successful determining a relationship between ''X'' and ''Y'', we can use this model to make predictions - i.e., given a value of ''X'' predict a corresponding ''Y'' response. Note that in this design, data consists of paired observations (''X,Y'') - for example, the [[SOCR_Data_Dinov_020108_HeightsWeights | height and weight of individuals]]. |

| - | + | ||

| + | ===Lines in 2D=== | ||

| + | There are 3 types of lines in 2D planes - Vertical Lines, Horizontal Lines and Oblique Lines. In general, the mathematical representation of lines in 2D is given by equations like <math>aX + bY=c</math>, most frequently expressed as <math>Y=aX + b</math>, provides the line is not vertical. | ||

| + | |||

| + | Recall that there is a one-to-one correspondence between any line in 2D and (linear) equations of the form | ||

| + | : If the line is '''vertical''' (<math>X_1 =X_2</math>): <math>X=X_1</math>; | ||

| + | : If the line is '''horizontal''' (<math>Y_1 =Y_2</math>): <math>Y=Y_1</math>; | ||

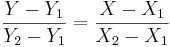

| + | : Otherwise ('''oblique''' line): <math>{Y-Y_1 \over Y_2-Y_1}= {X-X_1 \over X_2-X_1}</math>, (for <math>X_1\not=X_2</math> and <math>Y_1\not=Y_2</math>) | ||

| + | where <math>(X_1,Y_1)</math> and <math>(X_2, Y_2)</math> are two points on the line of interest (2-distinct points in 2D determine a unique line). | ||

| + | |||

| + | * Try drawing the following lines manually and [http://www.pserc.cornell.edu/pserc/java/graph/examples/parse1d.html using this applet]: | ||

| + | : Y=2X+1 | ||

| + | : Y=-3X-5 | ||

| + | |||

| + | === The Correlation Coefficient=== | ||

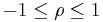

| + | '''Correlation coefficient''' (<math>-1 \leq \rho \leq 1</math>) is a measure of linear association, or clustering around a line of multivariate data. The main relationship between two variables (''X, Y'') can be summarized by: <math>(\mu_X, \sigma_X)</math>, <math>(\mu_Y, \sigma_Y)</math> and the correlation coefficient, denoted by <math>\rho=\rho_{(X,Y)}=R(X,Y)</math>. | ||

| + | |||

| + | * If <math>\rho=1</math>, we have a perfect positive correlation (straight line relationship between the two variables) | ||

| + | * If <math>\rho=0</math>, there is no correlation (random cloud scatter), i.e., no ''linear'' relation between ''X'' and ''Y''. | ||

| + | * If <math>\rho= –1</math>, there is a perfect negative correlation between the variables. | ||

| + | |||

| + | ====Computing <math>\rho=R(X,Y)</math>==== | ||

| + | The protocol for computing the correlation involves standardizing, multiplication and averaging. | ||

| + | |||

| + | * In general, for any [[AP_Statistics_Curriculum_2007_Distrib_RV | random variable]]: | ||

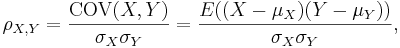

| + | :<math>\rho_{X,Y}={\mathrm{COV}(X,Y) \over \sigma_X \sigma_Y} ={E((X-\mu_X)(Y-\mu_Y)) \over \sigma_X\sigma_Y},</math> | ||

| + | where ''E'' is the [[AP_Statistics_Curriculum_2007_Distrib_MeanVar | expected value]] operator and ''COV'' means [[AP_Statistics_Curriculum_2007_Distrib_MeanVar#Properties_of_Variance | covariance]]. | ||

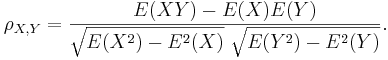

| + | Since μ<sub>''X''</sub> = E(''X''), σ<sub>''X''</sub><sup>2</sup> = E(''X''<sup>2</sup>) − E<sup>2</sup>(''X'') and similarly for ''Y'', we may also write | ||

| + | |||

| + | :<math>\rho_{X,Y}=\frac{E(XY)-E(X)E(Y)}{\sqrt{E(X^2)-E^2(X)}~\sqrt{E(Y^2)-E^2(Y)}}.</math> | ||

| + | |||

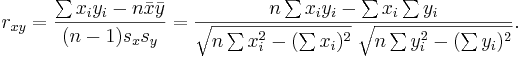

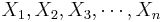

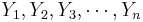

| + | * '''Sample correlation''' - we only have sampled data - we replace the (unknown) expectations and standard deviations by their sample analogues (sample-mean and sample-standard deviation) to compute the sample correlation correlation: | ||

| + | |||

| + | : Suppose {<math>X_1, X_2, X_3, \cdots, X_n</math>} and {<math>Y_1, Y_2, Y_3, \cdots, Y_n</math>} are bivariate observations of the same process and <math>(\mu_X, \sigma_X)</math> and <math>(\mu_Y, \sigma_Y)</math> are the means and standard deviations for the X and Y measurements, respectively. | ||

| + | |||

| + | : <math> r_{xy}=\frac{\sum x_iy_i-n \bar{x} \bar{y}}{(n-1) s_x s_y}=\frac{n\sum x_iy_i-\sum x_i\sum y_i} {\sqrt{n\sum x_i^2-(\sum x_i)^2}~\sqrt{n\sum y_i^2-(\sum y_i)^2}}. </math> | ||

| + | |||

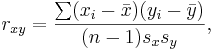

| + | :<math> r_{xy}=\frac{\sum (x_i-\bar{x})(y_i-\bar{y})}{(n-1) s_x s_y}, </math> | ||

| + | |||

| + | where <math>\bar{x}</math> and <math>\bar{y}</math> are the sample means of ''X'' and ''Y'' , ''s''<sub>''x''</sub> and ''s''<sub>''y''</sub> are the sample standard deviations of ''X'' and ''Y'' and the sum is from ''i'' = 1 to ''n''. We may rewrite this as | ||

| + | |||

| + | :<math> r_{xy}=\frac{\sum x_iy_i-n \bar{x} \bar{y}}{(n-1) s_x s_y}=\frac{n\sum x_iy_i-\sum x_i\sum y_i} {\sqrt{n\sum x_i^2-(\sum x_i)^2}~\sqrt{n\sum y_i^2-(\sum y_i)^2}}. </math> | ||

| + | |||

| + | * Note: The correlation is defined only if both of the standard deviations are finite and both of them are nonzero. It is a corollary of the [http://en.wikipedia.org/wiki/Cauchy-Schwarz_inequality Cauchy-Schwarz inequality] that the correlation is always bound <math>-1 \leq \rho \leq 1</math>. | ||

| + | |||

[Figure: AP_Statistics_Curriculum_2007_IntroVar_Dinov_061407_Fig1.png]

Revision as of 04:50, 17 February 2008


General Advanced Placement (AP) Statistics Curriculum - Correlation

Many biomedical, social, engineering and science applications involve analyzing the relationships, if any, between two or more variables in the process of interest. We begin with the simplest situation, where bivariate data (X and Y) are measured for a process and we are interested in determining the association, relation or an appropriate model for these observations (e.g., fitting a straight line to the (X,Y) pairs). If we succeed in determining a relationship between X and Y, we can use this model to make predictions, i.e., given a value of X, predict the corresponding Y response. Note that in this design the data consist of paired observations (X,Y), for example, the height and weight of individuals.

Lines in 2D

There are three types of lines in the 2D plane: vertical, horizontal and oblique. In general, a line in 2D is represented by an equation of the form aX + bY = c, most frequently expressed in slope-intercept form as Y = aX + b (with a new pair of constants a, b), provided the line is not vertical.
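For instance, solving aX + bY = c for Y, which is possible exactly when b ≠ 0, gives the slope-intercept form. The minimal sketch below uses a hypothetical helper, `to_slope_intercept`, that assumes integer coefficients and returns exact fractions; the slope and intercept are called m and k to avoid clashing with the a, b, c of the general form.

```python
from fractions import Fraction

def to_slope_intercept(a, b, c):
    """Rewrite aX + bY = c (integer coefficients assumed) as Y = mX + k.

    Returns (m, k) as exact Fractions. Raises ValueError for vertical
    lines (b == 0), which have no slope-intercept form.
    """
    if b == 0:
        if a == 0:
            raise ValueError("a and b cannot both be zero")
        raise ValueError(f"vertical line X = {Fraction(c, a)}")
    # Solve for Y: bY = c - aX  =>  Y = (-a/b) X + c/b
    return Fraction(-a, b), Fraction(c, b)

m, k = to_slope_intercept(2, -1, -1)   # 2X - Y = -1, i.e. Y = 2X + 1
print(m, k)                            # 2 1
```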

Recall that there is a one-to-one correspondence between lines in 2D and linear equations of the following forms:

- If the line is vertical (X1 = X2): X = X1;

- If the line is horizontal (Y1 = Y2): Y = Y1;

- Otherwise (oblique line, for X1 ≠ X2 and Y1 ≠ Y2):

$$\frac{Y-Y_1}{Y_2-Y_1} = \frac{X-X_1}{X_2-X_1},$$

where (X1,Y1) and (X2,Y2) are two distinct points on the line of interest (two distinct points in 2D determine a unique line).
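Solving the two-point form for Y gives Y = Y1 + m(X − X1), with slope m = (Y2 − Y1)/(X2 − X1) and intercept Y1 − m·X1. The sketch below, built around a hypothetical function `line_through` that assumes integer coordinates, applies the three cases above to a pair of distinct points.

```python
from fractions import Fraction

def line_through(p1, p2):
    """Classify the line through two distinct points with integer coordinates.

    Returns ("vertical", X1), ("horizontal", Y1) or ("oblique", m, k),
    where Y = m*X + k comes from solving the two-point form for Y.
    """
    (x1, y1), (x2, y2) = p1, p2
    if (x1, y1) == (x2, y2):
        raise ValueError("two distinct points are needed to determine a line")
    if x1 == x2:
        return ("vertical", x1)        # X = X1
    if y1 == y2:
        return ("horizontal", y1)      # Y = Y1
    m = Fraction(y2 - y1, x2 - x1)     # slope (Y2 - Y1)/(X2 - X1)
    k = y1 - m * x1                    # intercept: the line passes through (X1, Y1)
    return ("oblique", m, k)

print(line_through((0, 1), (1, 3)))    # ('oblique', Fraction(2, 1), Fraction(1, 1))
```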

- Try drawing the following lines by hand and using this applet (http://www.pserc.cornell.edu/pserc/java/graph/examples/parse1d.html):

- Y=2X+1

- Y=-3X-5
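One way to draw these lines by hand is to tabulate a few (X, Y) points and connect them. A small sketch with a hypothetical `points_on_line` helper:

```python
def points_on_line(slope, intercept, xs):
    """Return (X, Y) pairs lying on the line Y = slope*X + intercept."""
    return [(x, slope * x + intercept) for x in xs]

for label, (m, k) in {"Y=2X+1": (2, 1), "Y=-3X-5": (-3, -5)}.items():
    print(label, points_on_line(m, k, range(-2, 3)))
# Y=2X+1 [(-2, -3), (-1, -1), (0, 1), (1, 3), (2, 5)]
# Y=-3X-5 [(-2, 1), (-1, -2), (0, -5), (1, -8), (2, -11)]
```

Y = 2X + 1 rises (positive slope) while Y = -3X - 5 falls (negative slope), which is visible in the tabulated values.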

The Correlation Coefficient

The correlation coefficient (-1 ≤ ρ ≤ 1) is a measure of linear association, i.e., of how closely bivariate data cluster around a straight line. The main features of the relationship between two variables (X, Y) can be summarized by their means and standard deviations, (μX,σX) and (μY,σY), together with the correlation coefficient, denoted by ρ = ρ(X,Y) = R(X,Y).

- If ρ = 1, there is a perfect positive correlation: all points lie on a straight line with positive slope.

- If ρ = 0, there is no correlation (e.g., a random cloud of points), i.e., no linear relation between X and Y; a nonlinear relation may still exist.

- If ρ = -1, there is a perfect negative correlation: all points lie on a straight line with negative slope.
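The three cases can be checked numerically. The sketch below computes the correlation of paired data with a hypothetical helper, `pearson_r`, that uses the deviation (centred-sum) form, for the two practice lines Y = 2X + 1 and Y = -3X - 5 and for Y = X². The last case shows that ρ = 0 rules out only a linear relation.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Correlation of paired data computed from centred sums (deviation form)."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    sxx = sum((x - xbar) ** 2 for x in xs)
    syy = sum((y - ybar) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

xs = [-2, -1, 0, 1, 2]
print(pearson_r(xs, [2 * x + 1 for x in xs]))    # 1.0  (perfect positive)
print(pearson_r(xs, [-3 * x - 5 for x in xs]))   # -1.0 (perfect negative)
print(pearson_r(xs, [x * x for x in xs]))        # 0.0  (exact but nonlinear relation)
```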

Computing ρ = R(X,Y)

Computing the correlation involves three steps: standardizing each variable, multiplying the standardized values pairwise, and averaging the products.
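The three steps translate directly into code. This is a minimal sketch under the usual sample convention: standard deviations use the divisor n − 1, so the "average" of the products also divides by n − 1. The function name and the data are hypothetical. A constant sample has zero standard deviation, and this sketch then raises ZeroDivisionError.

```python
from statistics import mean, stdev

def corr_by_standardizing(xs, ys):
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mx, my = mean(xs), mean(ys)
    sx, sy = stdev(xs), stdev(ys)                 # sample SDs (divisor n - 1)
    zx = [(x - mx) / sx for x in xs]              # 1) standardize X ...
    zy = [(y - my) / sy for y in ys]              #    ... and Y
    products = [a * b for a, b in zip(zx, zy)]    # 2) multiply pairwise
    return sum(products) / (n - 1)                # 3) average with divisor n - 1

# Hypothetical paired data
print(round(corr_by_standardizing([1, 2, 3, 4, 5], [2, 4, 5, 4, 5]), 4))  # 0.7746
```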

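- In general, for any pair of random variables X and Y:

$$\rho_{X,Y} = \frac{\mathrm{COV}(X,Y)}{\sigma_X \sigma_Y} = \frac{E\big((X-\mu_X)(Y-\mu_Y)\big)}{\sigma_X \sigma_Y},$$

where E is the expected value operator and COV denotes covariance. Since μX = E(X), expanding the product gives COV(X,Y) = E(XY) − E(X)E(Y); likewise σX² = E(X²) − E²(X), and similarly for Y. Hence we may also write

$$\rho_{X,Y} = \frac{E(XY) - E(X)E(Y)}{\sqrt{E(X^2) - E^2(X)}\,\sqrt{E(Y^2) - E^2(Y)}}.$$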
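Both expressions can be evaluated for a discrete joint distribution. The sketch below assumes a small, made-up joint probability mass function for two 0/1 variables, stored as a dict `{(x, y): probability}`. It computes ρ both from the definition and from the moment form, and the two results agree up to floating-point rounding. All function names are hypothetical.

```python
from math import sqrt

def expect(pmf, g):
    """E[g(X, Y)] for a discrete joint pmf given as {(x, y): probability}."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())

def rho_definition(pmf):
    # COV(X, Y) / (sigma_X * sigma_Y), using centred moments
    mu_x, mu_y = expect(pmf, lambda x, y: x), expect(pmf, lambda x, y: y)
    cov = expect(pmf, lambda x, y: (x - mu_x) * (y - mu_y))
    sd_x = sqrt(expect(pmf, lambda x, y: (x - mu_x) ** 2))
    sd_y = sqrt(expect(pmf, lambda x, y: (y - mu_y) ** 2))
    return cov / (sd_x * sd_y)

def rho_moments(pmf):
    # (E(XY) - E(X)E(Y)) / (sqrt(E(X^2) - E^2(X)) * sqrt(E(Y^2) - E^2(Y)))
    ex, ey = expect(pmf, lambda x, y: x), expect(pmf, lambda x, y: y)
    exy = expect(pmf, lambda x, y: x * y)
    ex2, ey2 = expect(pmf, lambda x, y: x * x), expect(pmf, lambda x, y: y * y)
    return (exy - ex * ey) / (sqrt(ex2 - ex ** 2) * sqrt(ey2 - ey ** 2))

# Hypothetical joint distribution (probabilities sum to 1)
pmf = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.1, (1, 1): 0.4}
print(round(rho_definition(pmf), 6), round(rho_moments(pmf), 6))  # 0.6 0.6
```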

- Sample correlation: when we only have sampled data, we replace the (unknown) expectations and standard deviations by their sample analogues (the sample mean and the sample standard deviation) to compute the sample correlation.

- Suppose {x1, x2, x3, ..., xn} and {y1, y2, y3, ..., yn} are paired (bivariate) observations of the same process. The sample analogues of (μX,σX) and (μY,σY) are (x̄, sx) and (ȳ, sy), and the sample correlation is

$$r_{xy} = \frac{\sum (x_i-\bar{x})(y_i-\bar{y})}{(n-1)\, s_x s_y},$$

where x̄ and ȳ are the sample means of X and Y, sx and sy are the sample standard deviations of X and Y, and the sum is from i = 1 to n. We may rewrite this as

$$r_{xy} = \frac{\sum x_i y_i - n\bar{x}\bar{y}}{(n-1)\, s_x s_y} = \frac{n\sum x_i y_i - \sum x_i \sum y_i}{\sqrt{n\sum x_i^2 - \left(\sum x_i\right)^2}\,\sqrt{n\sum y_i^2 - \left(\sum y_i\right)^2}}.$$
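The equivalence of these sample formulas can be checked on a small, hypothetical data set. Each function below (names are illustrative) implements one of the expressions: the deviation form, the Σxiyi − n·x̄·ȳ form, and the raw-sums form.

```python
from math import sqrt
from statistics import mean, stdev

def r_definitional(xs, ys):
    # sum (x_i - xbar)(y_i - ybar) / ((n - 1) s_x s_y)
    n, xbar, ybar = len(xs), mean(xs), mean(ys)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    return sxy / ((n - 1) * stdev(xs) * stdev(ys))

def r_shortcut(xs, ys):
    # (sum x_i y_i - n xbar ybar) / ((n - 1) s_x s_y)
    n = len(xs)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    return (sum_xy - n * mean(xs) * mean(ys)) / ((n - 1) * stdev(xs) * stdev(ys))

def r_raw_sums(xs, ys):
    # Uses only n, sum x, sum y, sum x^2, sum y^2 and sum xy
    n = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_x2 = sum(x * x for x in xs)
    sum_y2 = sum(y * y for y in ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    return (n * sum_xy - sum_x * sum_y) / (
        sqrt(n * sum_x2 - sum_x ** 2) * sqrt(n * sum_y2 - sum_y ** 2))

xs, ys = [1, 2, 3, 4, 5], [2, 4, 5, 4, 5]   # hypothetical paired data
for f in (r_definitional, r_shortcut, r_raw_sums):
    print(f.__name__, round(f(xs, ys), 4))   # 0.7746 for all three
```

The raw-sums form is convenient by hand because it needs only six running totals. In floating-point arithmetic, however, subtracting nearly equal large sums can lose precision, so the deviation form is generally the safer choice in code.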

- Note: The correlation is defined only if both standard deviations are finite and nonzero. The Cauchy-Schwarz inequality gives |COV(X,Y)| ≤ σXσY, so the correlation is always bounded: -1 ≤ ρ ≤ 1.
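A minimal sketch of both points follows. The hypothetical function `sample_corr` returns None when either sample has zero spread, because the correlation is then undefined. A simulation with arbitrary random data spot-checks the bound. The simulation only illustrates the bound and is not a proof; the proof is the Cauchy-Schwarz argument.

```python
import random
from math import sqrt

def sample_corr(xs, ys):
    """Sample correlation; None when a standard deviation is zero (undefined)."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    sxx = sum((x - xbar) ** 2 for x in xs)
    syy = sum((y - ybar) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return None   # a constant sample has zero SD, so r is undefined
    return sxy / sqrt(sxx * syy)

print(sample_corr([1, 2, 3], [7, 7, 7]))   # None

# Spot-check -1 <= r <= 1 on random (seeded, reproducible) data
rng = random.Random(0)
for _ in range(1000):
    xs = [rng.gauss(0, 1) for _ in range(10)]
    ys = [rng.uniform(-5, 5) for _ in range(10)]
    r = sample_corr(xs, ys)
    assert -1 <= r <= 1
```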

Approach

Models & strategies for solving the problem, data understanding & inference.

- TBD

Model Validation

Checking/affirming underlying assumptions.

- TBD

Computational Resources: Internet-based SOCR Tools

- TBD

Examples

Computer simulations and real observed data.

- TBD

Hands-on activities

Step-by-step practice problems.

- TBD

