AP Statistics Curriculum 2007 NonParam ANOVA

From Socr

| (12 intermediate revisions not shown) | |||

| Line 1: | Line 1: | ||

| - | [[AP_Statistics_Curriculum_2007 | General Advance-Placement (AP) Statistics Curriculum]] - Means of Several Independent Samples | + | ==[[AP_Statistics_Curriculum_2007 | General Advance-Placement (AP) Statistics Curriculum]] - Means of Several Independent Samples== |

| - | + | In this section we extend the [[EBook#Chapter_XI:_Analysis_of_Variance_.28ANOVA.29 | multi-sample inference which we discussed in the ANOVA section]], to the situation where the [[AP_Statistics_Curriculum_2007_ANOVA_1Way#ANOVA_Conditions| ANOVA assumptions]] are invalid. Hence we use a non-parametric analysis to study differences in centrality between two or more populations. | |

| - | + | ||

| - | == | + | ===Motivational Example=== |

| - | + | Suppose four groups of students are randomly assigned to be taught with four different techniques, and their achievement test scores are recorded. Are the distributions of test scores the same, or do they differ in location? The data is presented in the table below. | |

| - | == | + | <center> |

| - | + | {| class="wikitable" style="text-align:center; width:35%" border="1" | |

| + | |- | ||

| + | | colspan=5| Teaching Method | ||

| + | |- | ||

| + | | || '''Method 1''' || '''Method 2''' || '''Method 3''' || '''Method 4''' | ||

| + | |- | ||

| + | | rowspan=4| Index || 65 || 75 || 59 || 94 | ||

| + | |- | ||

| + | | 87 || 69 || 78 || 89 | ||

| + | |- | ||

| + | | 73 || 83 || 67 || 80 | ||

| + | |- | ||

| + | | 79 || 81 || 62 || 88 | ||

| + | |} | ||

| + | </center> | ||

| - | + | The small sample sizes and the lack of distribution information of each sample illustrate how ANOVA may not be appropriate for analyzing these types of data. | |

| - | + | ||

| - | == | + | ==The Kruskal-Wallis Test== |

| - | + | '''Kruskal-Wallis One-Way Analysis of Variance''' by ranks is a non-parametric method for testing equality of two or more population medians. Intuitively, it is identical to a [[AP_Statistics_Curriculum_2007_ANOVA_1Way | One-Way Analysis of Variance]] with the raw data (observed measurements) replaced by their ranks. | |

| + | |||

| + | Since it is a non-parametric method, the Kruskal-Wallis Test '''does not''' assume a normal population, unlike the analogous one-way ANOVA. However, the test does assume identically-shaped distributions for all groups, except for any difference in their centers (e.g., medians). | ||

| + | |||

| + | ==Calculations== | ||

| + | Let ''N'' be the total number of observations, then <math>N = \sum_{i=1}^k {n_i}</math>. | ||

| + | |||

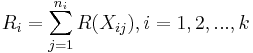

| + | Let <math>R(X_{ij})</math> denotes the rank assigned to <math>X_{ij}</math> and let <math>R_i</math> be the sum of ranks assigned to the <math>i^{th}</math> sample. | ||

| + | |||

| + | : <math>R_i = \sum_{j=1}^{n_i} {R(X_{ij})}, i = 1, 2, ... , k</math>. | ||

| + | |||

| + | The SOCR program computes <math>R_i</math> for each sample. The test statistic is defined for the following formulation of hypotheses: | ||

| + | |||

| + | : <math>H_o</math>: All of the k population distribution functions are identical. | ||

| + | : <math>H_1</math>: At least one of the populations tends to yield larger observations than at least one of the other populations. | ||

| + | |||

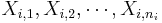

| + | Suppose {<math>X_{i,1}, X_{i,2}, \cdots, X_{i,n_i}</math>} represents the values of the <math>i^{th}</math> sample, where <math>1\leq i\leq k</math>. | ||

| + | |||

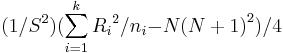

| + | : Test statistics: | ||

| + | :: T = <math>(1/{{S}^{2}}) (\sum_{i=1}^{k} {{R_i}^{2}} / {n_i} {-}{N {(N + 1)}^{2} }) / 4</math>, | ||

| + | where | ||

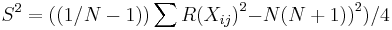

| + | ::<math>{{S}^{2}} = \left( \left({1/ {N - 1}}\right) \right) \sum{{R(X_{ij})}^{2}} {-} {N {\left(N + 1)\right)}^{2} } ) / 4</math>. | ||

| + | |||

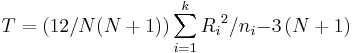

| + | * Note: If there are no ties, then the test statistic is reduced to: | ||

| + | ::<math>T = \left(12 / N(N+1) \right) \sum_{i=1}^{k} {{R_i}^{2}} / {n_i} {-} 3 \left(N+1\right)</math>. | ||

| + | |||

| + | However, the SOCR implementation allows for the possibility of having ties; so it uses the non-simplified, exact method of computation. | ||

| + | |||

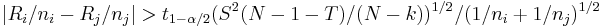

| + | Multiple comparisons have to be done here. For each pair of groups, the following is computed and printed at the '''Result''' Panel. | ||

| + | |||

| + | <math>|R_{i} /n_{i} -R_{j} /n_{j} | > t_{1-\alpha /2} (S^{2^{} } (N-1-T)/(N-k))^{1/2_{} } /(1/n_{i} +1/n_{j} )^{1/2_{}}</math>. | ||

| + | |||

| + | The SOCR computation employs the exact method instead of the approximate one (Conover 1980), since computation is easy and fast to implement and the exact method is somewhat more accurate. | ||

| + | |||

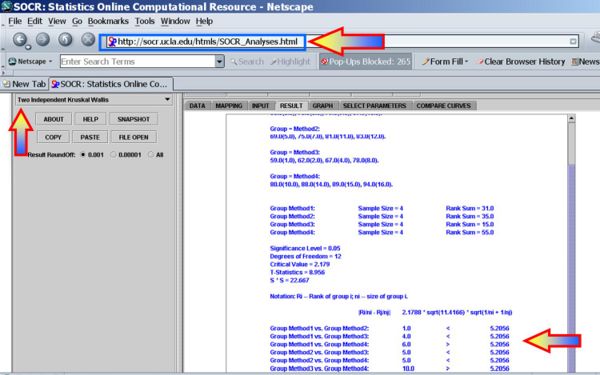

| + | ===The Kruskal-Wallis Test Using SOCR Analyses=== | ||

| + | It is much quicker to use [http://socr.ucla.edu/htmls/SOCR_Analyses.html SOCR Analyses] to compute the statistical significance of this test. This [[SOCR_EduMaterials_AnalysisActivities_KruskalWallis | SOCR KruskalWallis Test Activity]] may also be helpful in understanding how to use this test in SOCR. | ||

| + | |||

| + | For the teaching-methods example above, we can easily compute the statistical significance of the differences between the group medians (centers): | ||

| + | |||

| + | <center>[[Image:SOCR_EBook_Dinov_KruskalWallis_030108_Fig1.jpg|600px]]</center> | ||

| + | |||

| + | Clearly, there is only one significant group difference between medians, after the multiple testing correction, for the group1 vs. group4 comparison (see below): | ||

| + | |||

| + | : Group Method1 vs. Group Method2: 1.0 < 5.2056 | ||

| + | : Group Method1 vs. Group Method3: 4.0 < 5.2056 | ||

| + | : '''Group Method1 vs. Group Method4: 6.0 > 5.2056''' | ||

| + | : Group Method2 vs. Group Method3: 5.0 < 5.2056 | ||

| + | : Group Method2 vs. Group Method4: 5.0 < 5.2056 | ||

| + | : Group Method3 vs. Group Method4: 10.0 > 5.2056 | ||

| - | == | + | ==Practice Examples== |

TBD | TBD | ||

| - | + | ==Notes== | |

| + | * The [http://en.wikipedia.org/wiki/Friedman_test Friedman Fr Test] is the rank equivalent of the randomized block design alternative to the [[AP_Statistics_Curriculum_2007_ANOVA_2Way |Two-Way Analysis of Variance F Test]]. [[SOCR_EduMaterials_AnalysisActivities_Friedman | The SOCR Friedman Test Activity ]] demonstrates how to use [http://socr.ucla.edu/htmls/SOCR_Analyses.html SOCR Analyses] to compute the Friedman Test statistics and p-value. | ||

==References== | ==References== | ||

| - | + | Conover W (1980). Practical Nonparametric Statistics. John Wiley & Sons, New York, second edition. | |

<hr> | <hr> | ||

Current revision as of 21:00, 28 June 2010


General Advanced Placement (AP) Statistics Curriculum - Means of Several Independent Samples

In this section we extend the multi-sample inference discussed in the ANOVA section to situations where the ANOVA assumptions are not satisfied. In such cases we use a non-parametric analysis to study differences in centrality (location) between two or more populations.

Motivational Example

Suppose four groups of students are randomly assigned to be taught with four different techniques, and their achievement test scores are recorded. Are the distributions of test scores the same, or do they differ in location? The data is presented in the table below.

Achievement test scores (Index) by teaching method:

| Method 1 | Method 2 | Method 3 | Method 4 |
|---|---|---|---|
| 65 | 75 | 59 | 94 |
| 87 | 69 | 78 | 89 |
| 73 | 83 | 67 | 80 |
| 79 | 81 | 62 | 88 |

The small sample sizes, together with the lack of information about the distribution of each sample, suggest that ANOVA may not be appropriate for analyzing these data.

The Kruskal-Wallis Test

Kruskal-Wallis One-Way Analysis of Variance by ranks is a non-parametric method for testing the equality of two or more population medians. Intuitively, it is a One-Way Analysis of Variance performed on the ranks of the observations rather than on the raw measurements.

Since it is a non-parametric method, the Kruskal-Wallis Test does not assume a normal population, unlike the analogous one-way ANOVA. However, the test does assume identically-shaped distributions for all groups, except for any difference in their centers (e.g., medians).

Calculations

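Let $k$ be the number of samples and $n_i$ the size of the $i$th sample. The total number of observations is then

$$N = \sum_{i=1}^{k} n_i.$$

All $N$ observations are pooled and ranked from 1 (smallest) to $N$ (largest); tied observations each receive the average of the ranks they would otherwise occupy.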

Let $R(X_{ij})$ denote the rank assigned to $X_{ij}$, and let $R_i$ be the sum of the ranks assigned to the $i$th sample:

$$R_i = \sum_{j=1}^{n_i} R(X_{ij}), \quad i = 1, 2, \ldots, k.$$
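The ranking step can be sketched in plain Python as follows. This is a minimal sketch, not SOCR's code: `midranks` and `rank_sums` are hypothetical helper names. It ranks the pooled sample, gives tied values the average of their ranks, and sums the ranks within each group of the teaching-methods data.

```python
def midranks(values):
    """Rank values 1..N in ascending order; tied values share the average rank."""
    order = sorted(range(len(values)), key=lambda idx: values[idx])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        # Extend 'end' over the run of values tied with the one at position pos.
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        # Sorted positions pos..end (0-based) hold ranks pos+1..end+1; average them.
        avg = (pos + 1 + end + 1) / 2
        for p in range(pos, end + 1):
            ranks[order[p]] = avg
        pos = end + 1
    return ranks


def rank_sums(groups):
    """Return (ranks split by group, R_i for each group) from the pooled ranking."""
    pooled = [x for g in groups for x in g]
    r = midranks(pooled)
    split, start = [], 0
    for g in groups:
        split.append(r[start:start + len(g)])
        start += len(g)
    return split, [sum(rg) for rg in split]


groups = [
    [65, 87, 73, 79],  # Method 1
    [75, 69, 83, 81],  # Method 2
    [59, 78, 67, 62],  # Method 3
    [94, 89, 80, 88],  # Method 4
]
ranks, R = rank_sums(groups)
print(R)  # [31.0, 35.0, 15.0, 55.0]
```

The rank sums add up to $N(N+1)/2 = 136$, which is a quick sanity check on any ranking.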

The SOCR program computes $R_i$ for each sample. The test statistic is defined for the following formulation of hypotheses:

- $H_0$: All of the $k$ population distribution functions are identical.

- $H_1$: At least one of the populations tends to yield larger observations than at least one of the other populations.

Suppose $\{X_{i1}, X_{i2}, \ldots, X_{in_i}\}$ represents the values of the $i$th sample, where $1 \le i \le k$.

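- Test statistic:

$$T = \frac{1}{S^2}\left(\sum_{i=1}^{k} \frac{R_i^2}{n_i} - \frac{N(N+1)^2}{4}\right),$$

where

$$S^2 = \frac{1}{N-1}\left(\sum_{i=1}^{k}\sum_{j=1}^{n_i} R(X_{ij})^2 - \frac{N(N+1)^2}{4}\right).$$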
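A minimal Python sketch of the general (tie-aware) formulas for $S^2$ and $T$, assuming the pooled ranks have already been computed and split by group. The function name `kruskal_wallis_T` is hypothetical and not part of SOCR.

```python
def kruskal_wallis_T(rank_groups):
    """Tie-aware statistic T and variance term S^2 from the ranks of each group.

    rank_groups: list of lists holding the pooled ranks R(X_ij), one list per group.
    Returns the tuple (T, S2).
    """
    n = [len(g) for g in rank_groups]
    N = sum(n)
    correction = N * (N + 1) ** 2 / 4  # the N(N+1)^2/4 term shared by both formulas
    # S^2: sum of all squared ranks minus the correction, divided by N - 1.
    S2 = (sum(r * r for g in rank_groups for r in g) - correction) / (N - 1)
    # T: sum of R_i^2 / n_i minus the correction, divided by S^2.
    T = (sum(sum(g) ** 2 / ni for g, ni in zip(rank_groups, n)) - correction) / S2
    return T, S2


# Pooled ranks of the teaching-methods data (all scores are distinct, so no ties).
rank_groups = [
    [3, 13, 6, 9],     # Method 1
    [7, 5, 12, 11],    # Method 2
    [1, 8, 4, 2],      # Method 3
    [16, 15, 10, 14],  # Method 4
]
T, S2 = kruskal_wallis_T(rank_groups)
print(round(T, 4), round(S2, 4))  # 8.9559 22.6667
```

Because the function uses the squared ranks directly, midranks from tied observations are handled without any change.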

- Note: If there are no ties, then $S^2 = N(N+1)/12$ and the test statistic reduces to:

$$T = \frac{12}{N(N+1)} \sum_{i=1}^{k} \frac{R_i^2}{n_i} - 3(N+1).$$
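As a worked check of the no-ties formula on the teaching-methods data (all 16 scores are distinct): ranking the pooled scores gives the rank sums $R_1 = 31$, $R_2 = 35$, $R_3 = 15$, $R_4 = 55$, with $n_i = 4$ and $N = 16$, so

$$T = \frac{12}{16 \cdot 17}\cdot\frac{31^2 + 35^2 + 15^2 + 55^2}{4} - 3 \cdot 17 = \frac{12 \cdot 1359}{272} - 51 \approx 59.956 - 51 = 8.956.$$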

However, the SOCR implementation allows for ties, so it always uses the general, non-simplified formula for $T$.
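The "ANOVA on ranks" intuition can be made explicit. The ranks always sum to $N(N+1)/2$ (midranks included), so their overall mean is $\bar r = (N+1)/2$, and expanding the squares gives

$$\sum_{i=1}^{k}\frac{R_i^2}{n_i} - \frac{N(N+1)^2}{4} = \sum_{i=1}^{k} n_i\left(\frac{R_i}{n_i} - \bar r\right)^2 = SS_{\text{between}},$$

$$\sum_{i=1}^{k}\sum_{j=1}^{n_i} R(X_{ij})^2 - \frac{N(N+1)^2}{4} = \sum_{i=1}^{k}\sum_{j=1}^{n_i}\left(R(X_{ij}) - \bar r\right)^2 = SS_{\text{total}},$$

so that

$$T = \frac{SS_{\text{between}}}{SS_{\text{total}}/(N-1)} = (N-1)\,\frac{SS_{\text{between}}}{SS_{\text{total}}}.$$

For the teaching-methods data, $SS_{\text{between}} = 203$ and $SS_{\text{total}} = 340$ on the ranks, giving $T = 15 \cdot 203 / 340 \approx 8.956$.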

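Multiple comparisons are then needed to determine which groups differ. For each pair of groups $i$ and $j$, SOCR evaluates the following inequality and prints the result in the Result panel; the two groups are judged to differ when

$$\left|\frac{R_i}{n_i} - \frac{R_j}{n_j}\right| > t_{1-\alpha/2}\left(\frac{S^2\,(N-1-T)}{N-k}\right)^{1/2}\left(\frac{1}{n_i} + \frac{1}{n_j}\right)^{1/2},$$

where $t_{1-\alpha/2}$ is the $1-\alpha/2$ quantile of the t distribution with $N-k$ degrees of freedom.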
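A minimal sketch of the pairwise comparison rule, assuming the rank sums, $S^2$ and $T$ are already available. To stay within the standard library, the t quantile is passed in rather than computed; here it is the tabulated value $t_{0.975} \approx 2.178813$ for $N - k = 12$ degrees of freedom (that is, $\alpha = 0.05$). The function names are hypothetical.

```python
import math


def critical_difference(S2, T, N, k, n_i, n_j, t_quantile):
    """Right-hand side of the pairwise inequality for groups of sizes n_i and n_j.

    t_quantile: the 1 - alpha/2 quantile of the t distribution with N - k degrees
    of freedom, supplied by the caller (for example, from a t table).
    """
    return (t_quantile
            * math.sqrt(S2 * (N - 1 - T) / (N - k))
            * math.sqrt(1 / n_i + 1 / n_j))


def pairwise_comparisons(R, n, S2, T, t_quantile):
    """Compare |R_i/n_i - R_j/n_j| with the critical difference for every pair."""
    N, k = sum(n), len(n)
    results = []
    for i in range(k):
        for j in range(i + 1, k):
            diff = abs(R[i] / n[i] - R[j] / n[j])
            crit = critical_difference(S2, T, N, k, n[i], n[j], t_quantile)
            # Groups are numbered from 1 to match the labels Method1..Method4.
            results.append((i + 1, j + 1, diff, crit, diff > crit))
    return results


# Teaching-methods example: rank sums and statistics from the pooled ranks.
R = [31, 35, 15, 55]
n = [4, 4, 4, 4]
S2 = 68 / 3          # N(N+1)/12 with N = 16, since there are no ties
T = 2436 / 272       # about 8.9559
t_975_12 = 2.178813  # tabulated t quantile, alpha = 0.05, 12 degrees of freedom
for i, j, diff, crit, sig in pairwise_comparisons(R, n, S2, T, t_975_12):
    print(f"Method{i} vs Method{j}: {diff:.1f} {'>' if sig else '<'} {crit:.4f}")
```

With these inputs the critical difference is about 5.2056, consistent with the SOCR output, and only the Method 1 vs. Method 4 and Method 3 vs. Method 4 differences exceed it.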

The SOCR computation uses the exact method rather than the approximate one (Conover 1980), because it is fast and easy to compute and somewhat more accurate.

The Kruskal-Wallis Test Using SOCR Analyses

It is much quicker to use SOCR Analyses to compute the statistical significance of this test. The SOCR Kruskal-Wallis Test Activity explains how to run this test in SOCR.

For the teaching-methods example above, SOCR Analyses computes the statistical significance of the differences between the group medians (centers):

[Figure: SOCR Analyses output of the Kruskal-Wallis test for the teaching-methods data]

Two of the pairwise comparisons exceed the critical difference of 5.2056, so the group medians differ significantly only for Method 1 vs. Method 4 and for Method 3 vs. Method 4 (see below):

- Group Method1 vs. Group Method2: 1.0 < 5.2056

- Group Method1 vs. Group Method3: 4.0 < 5.2056

- Group Method1 vs. Group Method4: 6.0 > 5.2056

- Group Method2 vs. Group Method3: 5.0 < 5.2056

- Group Method2 vs. Group Method4: 5.0 < 5.2056

- Group Method3 vs. Group Method4: 10.0 > 5.2056

Practice Examples

TBD

Notes

- The Friedman $F_r$ test is the rank-based alternative to the Two-Way Analysis of Variance F test for a randomized block design. The SOCR Friedman Test Activity demonstrates how to use SOCR Analyses to compute the Friedman test statistic and p-value.

References

Conover W (1980). Practical Nonparametric Statistics. John Wiley & Sons, New York, second edition.

- SOCR Home page: http://www.socr.ucla.edu
