AP Statistics Curriculum 2007 Prob Rules


(→Addition Rule) |

m (→Addition Rule) |

||

| Line 13: | Line 13: | ||

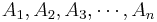

In general, for any ''n'', | In general, for any ''n'', | ||

:<math>P(\bigcup_{i=1}^n A_i) =\sum_{i=1}^n {P(A_i)} | :<math>P(\bigcup_{i=1}^n A_i) =\sum_{i=1}^n {P(A_i)} | ||

| - | -\sum_{i,j\,:\,i<j}{P(A_i\cap A_j)} +\sum_{i,j,k\,:\,i<j<k}{P(A_i\cap A_j\cap A_k)}+ \cdots\cdots\ +(-1)^{j+1} \sum_{i_1<i_2< \cdots < i_m}{P(\bigcap_{p=1}^m | + | -\sum_{i,j\,:\,i<j}{P(A_i\cap A_j)} +\sum_{i,j,k\,:\,i<j<k}{P(A_i\cap A_j\cap A_k)}+ \cdots\cdots\ +(-1)^{j+1} \sum_{i_1<i_2< \cdots < i_m}{P(\bigcap_{p=1}^m A_{i_p})}+ \cdots\cdots\ +(-1)^{n+1} P(\bigcap_{i=1}^n A_i).</math> |

===Conditional Probability=== | ===Conditional Probability=== | ||

Revision as of 21:48, 25 February 2008


General Advanced-Placement (AP) Statistics Curriculum - Probability Theory Rules

Addition Rule

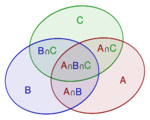

The probability of a union, also called the Inclusion-Exclusion principle, allows us to compute probabilities of composite events represented as unions (i.e., sums) of simpler events.

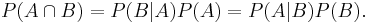

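For events A1, ..., An in a probability space (S,P), the probability of the union for n=2 is

:<math>P(A_1\cup A_2) = P(A_1) + P(A_2) - P(A_1\cap A_2).</math>

For n=3,

:<math>P(A_1\cup A_2\cup A_3) = P(A_1) + P(A_2) + P(A_3) - P(A_1\cap A_2) - P(A_1\cap A_3) - P(A_2\cap A_3) + P(A_1\cap A_2\cap A_3).</math>

In general, for any n,

:<math>P(\bigcup_{i=1}^n A_i) =\sum_{i=1}^n {P(A_i)} -\sum_{i,j\,:\,i<j}{P(A_i\cap A_j)} +\sum_{i,j,k\,:\,i<j<k}{P(A_i\cap A_j\cap A_k)}+ \cdots +(-1)^{m+1} \sum_{i_1<i_2< \cdots < i_m}{P(\bigcap_{p=1}^m A_{i_p})}+ \cdots +(-1)^{n+1} P(\bigcap_{i=1}^n A_i).</math>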

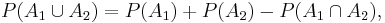

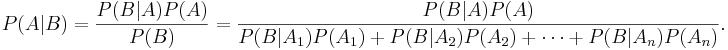
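The Inclusion-Exclusion principle can be checked numerically on a small finite sample space. The following is a minimal sketch, assuming equally likely outcomes; the 12-outcome space and the three events are hypothetical choices made only for illustration, and the helper names `prob` and `union_by_inclusion_exclusion` are not part of the original text.

```python
from fractions import Fraction
from itertools import combinations


def prob(event, space):
    """Probability of an event when all outcomes in `space` are equally likely."""
    return Fraction(len(event & space), len(space))


def union_by_inclusion_exclusion(events, space):
    """Alternating sum over all m-fold intersections, m = 1..n."""
    total = Fraction(0)
    for m in range(1, len(events) + 1):
        sign = (-1) ** (m + 1)
        for group in combinations(events, m):
            total += sign * prob(frozenset.intersection(*group), space)
    return total


# Hypothetical sample space: outcomes 1..12, all equally likely.
space = frozenset(range(1, 13))
events = [
    frozenset(x for x in space if x % 2 == 0),  # even outcomes
    frozenset(x for x in space if x % 3 == 0),  # multiples of 3
    frozenset(x for x in space if x > 8),       # outcomes above 8
]

direct = prob(frozenset.union(*events), space)
via_formula = union_by_inclusion_exclusion(events, space)
print(direct, via_formula)
```

Both values agree, since the alternating sum counts each outcome of the union exactly once.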

Conditional Probability

The conditional probability of A occurring given that B occurs is given by

:<math>P(A | B) = {P(A\cap B) \over P(B)},</math>

provided P(B) > 0.

Examples

Contingency table

The table below cross-classifies 400 melanoma (skin cancer) patients by type and site.

| Type | Site: Head and Neck | Site: Trunk | Site: Extremities | Totals |
|---|---|---|---|---|
| Hutchinson's melanomic freckle | 22 | 2 | 10 | 34 |
| Superficial | 16 | 54 | 115 | 185 |
| Nodular | 19 | 33 | 73 | 125 |
| Indeterminate | 11 | 17 | 28 | 56 |
| Column Totals | 68 | 106 | 226 | 400 |

- Suppose we select one of the 400 patients in the study at random and want the probability that the cancer is on the extremities given that it is of type nodular: P(Extremities | Nodular) = 73/125 = 0.584.

- What is the probability that for a randomly chosen patient the cancer type is Superficial given that it appears on the Trunk?
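Conditional probabilities of this kind are just a cell count divided by the matching row or column total. The sketch below encodes the melanoma counts from the table; the variable and function names are illustrative assumptions, not part of the original page.

```python
from fractions import Fraction

sites = ["Head and Neck", "Trunk", "Extremities"]

# Counts per melanoma type, in the same column order as `sites`.
counts = {
    "Hutchinson's melanomic freckle": [22, 2, 10],
    "Superficial": [16, 54, 115],
    "Nodular": [19, 33, 73],
    "Indeterminate": [11, 17, 28],
}


def p_site_given_type(site, kind):
    """P(site | type): cell count divided by the row total."""
    row = counts[kind]
    return Fraction(row[sites.index(site)], sum(row))


def p_type_given_site(kind, site):
    """P(type | site): cell count divided by the column total."""
    j = sites.index(site)
    column_total = sum(row[j] for row in counts.values())
    return Fraction(counts[kind][j], column_total)


print(p_site_given_type("Extremities", "Nodular"))  # 73/125
print(p_type_given_site("Superficial", "Trunk"))    # 54/106, reduced to 27/53
```

The first call conditions on a row (type), the second on a column (site), which is why the denominators differ.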

Monty Hall Problem

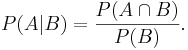

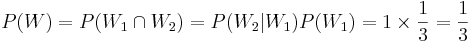

Recall that earlier we discussed the Monty Hall Experiment. We will now show why the odds of winning double if we use the swap strategy: the probability of a win is 2/3 if each time we switch and choose the remaining third card.

Denote W = {final win of the car prize}. Let L1 and W2 represent the events of choosing the donkey (losing) at the player's first choice and the car (winning) at the player's second choice, respectively. Then, the chance of winning in the swapping-strategy case is:

:<math>P(W) = P(L_1\cap W_2) = P(W_2 | L_1) P(L_1) = 1 \times {2\over 3} = {2\over 3}.</math>

If we played using the stay-home strategy, our chance of winning would have been:

:<math>P(W) = P(W_1\cap W_2) = P(W_2 | W_1) P(W_1) = 1 \times {1\over 3} = {1\over 3},</math>

or half the chance in the first (swapping) case. Here W1 denotes choosing the car at the first choice.
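The two strategies can also be compared by enumerating every equally likely game exactly. This is a minimal sketch, assuming three doors (or cards), a uniformly random car position and first pick, and a host who always opens a losing door other than the player's pick; the function name `win_probability` is hypothetical.

```python
from fractions import Fraction
from itertools import product


def win_probability(switch):
    """Exact chance of winning the car under the swap or stay strategy."""
    doors = (0, 1, 2)
    total = Fraction(0)
    for car, pick in product(doors, repeat=2):
        # The host may open any door that is neither the pick nor the car.
        host_options = [d for d in doors if d != pick and d != car]
        for opened in host_options:
            weight = Fraction(1, 9) / len(host_options)
            if switch:
                # Move to the one door that is neither picked nor opened.
                final = next(d for d in doors if d not in (pick, opened))
            else:
                final = pick
            if final == car:
                total += weight
    return total


print(win_probability(switch=True))   # swap strategy
print(win_probability(switch=False))  # stay strategy
```

Swapping wins exactly when the first pick was a donkey, which happens in 6 of the 9 equally likely (car, pick) pairs.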

Drawing balls without replacement

Suppose we draw 2 balls at random, one at a time and without replacement, from an urn containing 4 black and 3 white balls that are otherwise identical. What is the sample space? What is the probability that the second ball is black?

P({2nd ball is black}) = P({2nd is black} & {1st is black}) + P({2nd is black} & {1st is white}) = 4/7 x 3/6 + 4/6 x 3/7 = 4/7.
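One way to confirm this result is to list the sample space of ordered draws and count. The sketch below assumes the balls are individually labeled so that every ordered pair of distinct balls is equally likely; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import permutations

# Urn contents: 4 black (B) and 3 white (W) balls.
balls = ["B"] * 4 + ["W"] * 3

# Sample space: all ordered pairs of distinct balls (7 x 6 = 42 outcomes).
draws = list(permutations(range(len(balls)), 2))

second_black = [d for d in draws if balls[d[1]] == "B"]
p_second_black = Fraction(len(second_black), len(draws))

# Same quantity by conditioning on the color of the first ball.
p_by_conditioning = (
    Fraction(4, 7) * Fraction(3, 6)    # 1st black, then black
    + Fraction(3, 7) * Fraction(4, 6)  # 1st white, then black
)

print(p_second_black, p_by_conditioning)
```

Both computations give the same fraction, which also equals the chance that the first ball is black.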

Inverting the order of conditioning

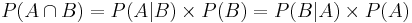

In many practical situations it is beneficial to be able to swap the event of interest and the conditioning event when we are computing probabilities. This can easily be accomplished using this trivial, yet powerful, identity:

:<math>P(A | B) \times P(B) = P(A\cap B) = P(B | A) \times P(A).</math>

Example - inverting conditioning

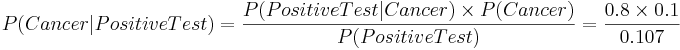

Suppose we classify the entire female population into 2 classes: healthy controls (NC) and cancer patients. If a woman has a positive mammogram result, what is the probability that she has breast cancer?

Suppose we obtain medical evidence for a subject in terms of the results of her mammogram (imaging) test: a positive or negative mammogram. If P(Positive Test) = 0.107, P(Cancer) = 0.1, P(Positive Test | Cancer) = 0.8, then we can easily calculate the probability of real interest, the chance that the subject has cancer:

P(Cancer | Positive Test) = P(Positive Test | Cancer) x P(Cancer) / P(Positive Test) = 0.8 x 0.1 / 0.107.

This equation has 3 known parameters and 1 unknown variable, so, we can solve for P(Cancer | Positive Test) to determine the chance the patient has breast cancer given that her mammogram was positively read. This probability, of course, will significantly influence the treatment action recommended by the physician.
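The inversion is a one-line computation once the three known probabilities are plugged in. A minimal sketch, using the values stated above; the function name `invert_conditioning` is a hypothetical helper.

```python
def invert_conditioning(p_b_given_a, p_a, p_b):
    """Return P(A | B) from P(B | A), P(A) and P(B)."""
    if not 0 < p_b <= 1:
        raise ValueError("P(B) must be in (0, 1]")
    return p_b_given_a * p_a / p_b


# A = Cancer, B = Positive Test, with the probabilities given in the text.
p_cancer_given_positive = invert_conditioning(
    p_b_given_a=0.8,  # P(Positive Test | Cancer)
    p_a=0.1,          # P(Cancer)
    p_b=0.107,        # P(Positive Test)
)
print(round(p_cancer_given_positive, 3))
```

With these inputs the result is roughly 0.75, so a positive mammogram raises the chance of cancer from 0.1 to about three in four.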

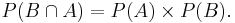

Statistical Independence

Events A and B are statistically independent if knowing whether B has occurred gives no new information about the chances of A occurring, i.e., if P(A | B) = P(A).

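Note that if A is independent of B, then B is also independent of A, i.e., P(B | A) = P(B), since

:<math>P(B | A) = {P(B\cap A) \over P(A)} = {P(A | B) P(B) \over P(A)} = {P(A) P(B) \over P(A)} = P(B).</math>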

If A and B are statistically independent, then

:<math>P(A\cap B) = P(A) P(B).</math>
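Independence can be tested directly by comparing P(A ∩ B) with P(A)P(B) on an enumerated sample space. The sketch below uses a hypothetical experiment, rolling two fair dice, which does not appear in the original text; the event names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Hypothetical sample space: ordered outcomes of two fair six-sided dice.
space = list(product(range(1, 7), repeat=2))


def prob(event):
    """Probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for w in space if event(w)), len(space))


def first_even(w):
    return w[0] % 2 == 0


def sum_is_seven(w):
    return w[0] + w[1] == 7


def sum_at_least_ten(w):
    return w[0] + w[1] >= 10


def independent(e, f):
    """True when P(E and F) equals P(E) * P(F)."""
    return prob(lambda w: e(w) and f(w)) == prob(e) * prob(f)


print(independent(first_even, sum_is_seven))      # True
print(independent(first_even, sum_at_least_ten))  # False
```

Knowing the first die is even says nothing about whether the sum is 7, but it does make a large sum more likely.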

Multiplication Rule

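For any two events (whether dependent or independent):

:<math>P(A\cap B) = P(B | A) P(A) = P(A | B) P(B).</math>

In general, for any collection of events:

:<math>P(A_1\cap A_2\cap \cdots \cap A_n) = P(A_1) P(A_2 | A_1) P(A_3 | A_1\cap A_2) \cdots P(A_n | A_1\cap A_2\cap \cdots \cap A_{n-1}).</math>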

Law of total probability

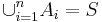

If {A1, A2, ..., An} form a partition of the sample space S (i.e., all events are mutually exclusive and <math>\bigcup_{i=1}^n A_i = S</math>), then for any event B

:<math>P(B) = \sum_{i=1}^n P(B | A_i) P(A_i).</math>

- Example: if A1 and A2 partition the sample space (think of males and females), then the probability of any event B (e.g., smoker) may be computed by: P(B) = P(B | A1)P(A1) + P(B | A2)P(A2). This, of course, is a simple consequence of the fact that P(B) = P(B ∩ S) = P(B ∩ (A1 ∪ A2)) = P(B ∩ A1) + P(B ∩ A2).
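The law of total probability is a weighted average of the conditional probabilities, with the partition probabilities as weights. A minimal sketch follows; the smoking rates and the 50/50 split are hypothetical numbers chosen only to illustrate the males/females example, and `total_probability` is an assumed helper name.

```python
def total_probability(conditionals, priors):
    """P(B) = sum of P(B | A_i) * P(A_i) over a partition {A_i}."""
    if len(conditionals) != len(priors):
        raise ValueError("need one conditional probability per partition event")
    if abs(sum(priors) - 1.0) > 1e-9:
        raise ValueError("partition probabilities must sum to 1")
    return sum(c * p for c, p in zip(conditionals, priors))


# Hypothetical values: A1 = male, A2 = female, B = smoker.
p_smoker = total_probability(
    conditionals=[0.20, 0.10],  # assumed P(B | A1), P(B | A2)
    priors=[0.5, 0.5],          # assumed P(A1), P(A2)
)
print(round(p_smoker, 2))  # 0.15
```

The check on `priors` enforces the partition requirement that the events together cover the whole sample space.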

Bayesian Rule

If {A1, A2, ..., An} form a partition of the sample space S and A and B are any events (subsets of S), then:

:<math>P(A | B) = {P(B | A) P(A) \over P(B)} = {P(B | A) P(A) \over \sum_{i=1}^n P(B | A_i) P(A_i)}.</math>

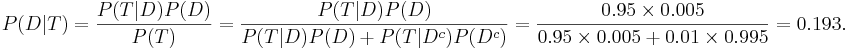

Example

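Suppose a laboratory blood test is used as evidence for a disease. Assume P(positive Test | Disease) = 0.95, P(positive Test | no Disease) = 0.01 and P(Disease) = 0.005. Find P(Disease | positive Test).

Denote D = {the tested person has the disease}, Dc = {the tested person does not have the disease} and T = {the test result is positive}. Then

:<math>P(D | T) = {P(T | D) P(D) \over P(T | D) P(D) + P(T | D^c) P(D^c)} = {0.95\times 0.005 \over 0.95\times 0.005 + 0.01\times 0.995} = {0.00475 \over 0.0147} \approx 0.323.</math>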
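The blood-test example can be reproduced numerically by applying the Bayesian rule with the partition {D, Dc}. This is a minimal sketch using the three probabilities stated in the example; the function name `posterior` is a hypothetical helper.

```python
def posterior(p_t_given_d, p_t_given_not_d, p_d):
    """P(D | T) via the Bayesian rule with the partition {D, Dc}."""
    # Law of total probability for the denominator P(T).
    p_t = p_t_given_d * p_d + p_t_given_not_d * (1 - p_d)
    return p_t_given_d * p_d / p_t


p_disease_given_positive = posterior(
    p_t_given_d=0.95,      # P(positive Test | Disease)
    p_t_given_not_d=0.01,  # P(positive Test | no Disease)
    p_d=0.005,             # P(Disease)
)
print(round(p_disease_given_positive, 3))
```

Even with an accurate test, the posterior is only about one in three, because the disease is rare and false positives from the large healthy group outnumber true positives.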

